Shadow AI: Security Risks and Practical Ways to Manage Them (+Expert Advice)

Last updated: 31 March 2026

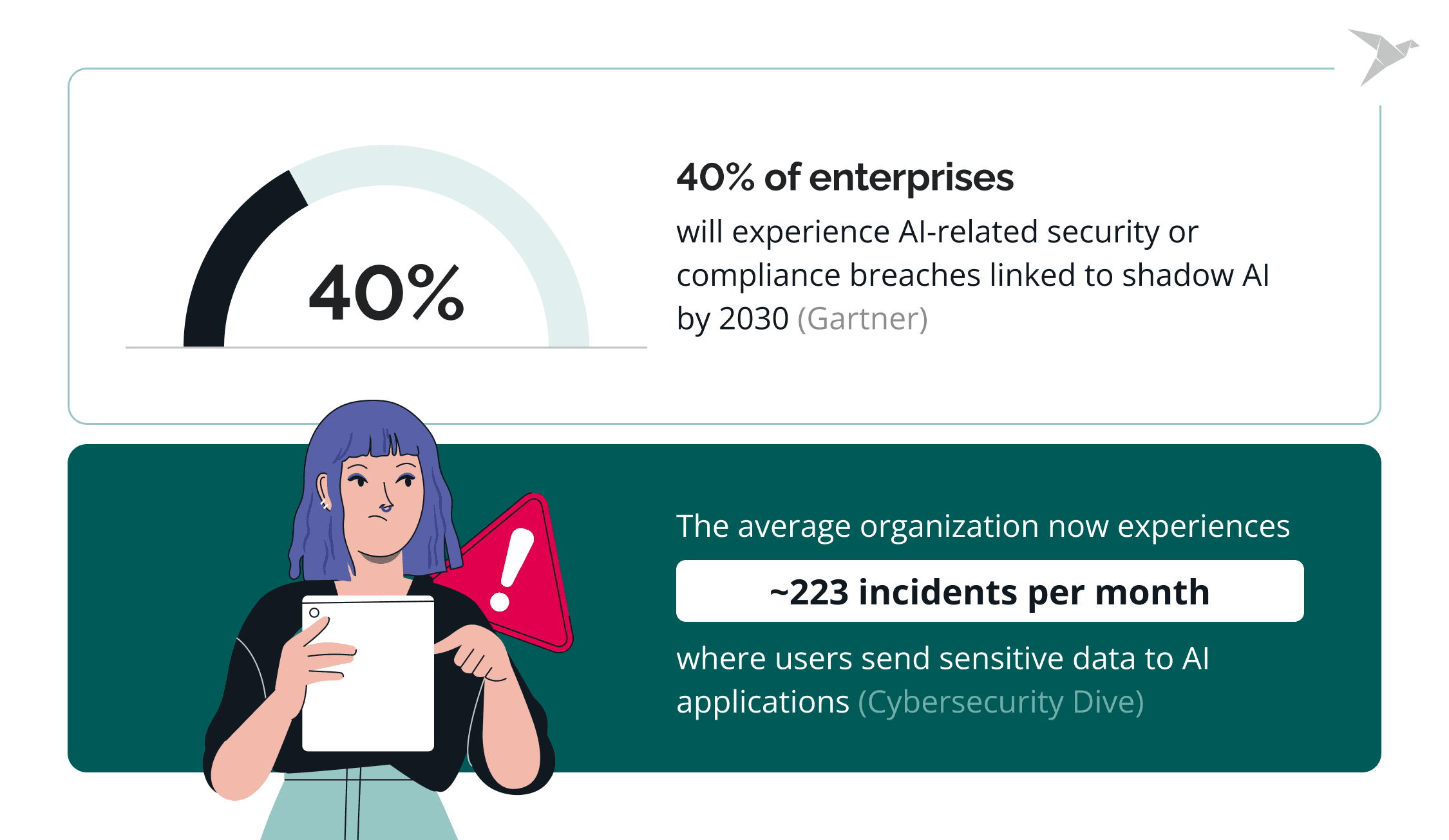

In February 2025, researchers showed that data from 20,000+ GitHub repositories that were later made private could still be surfaced via Copilot. This impacted 16,000+ organizations. That incident is a clean example of the shadow AI problem: employees adopt powerful AI tools fast, but security teams often can’t see what’s being used in the browser or what data is flowing into it.

In our new article, we discuss how shadow AI appears in modern organizations, why it poses serious security risks, and why traditional security controls often fail to detect it. We explore how unsanctioned AI tools lead to compliance exposure, data leaks, and governance gaps, and share practical ways security teams can detect and manage these risks without blocking innovation.

We also spoke with Darya Petrashka, Senior Data Scientist and Generative AI expert, who shared her perspective on the internal blind spots that drive uncontrolled AI adoption and what organizations can do to manage it more safely.

Key takeaways

- Shadow AI is already part of everyday work through browsers, SaaS AI platforms, and AI features built into common tools.

- The biggest challenge is visibility: security teams often cannot see which tools are in use, what data they access, or when employees share sensitive company data with them.

- The security risks of shadow AI include weak data protection, gaps in regulatory compliance, vendor risk, missing audit trails, cross-border transfer issues, and biased or misleading outputs.

- Bans alone rarely work, especially as new AI capabilities appear in common apps and image generation tools.

- A safer approach combines governance, approved use cases, clear rules, monitoring, and stronger data protection.

What Is Shadow AI?

Shadow AI is the use of generative AI tools by employees without approval, visibility, or guidance from the organization. It emerges when people adopt AI on their own to speed up daily work, outside formal governance and security processes.

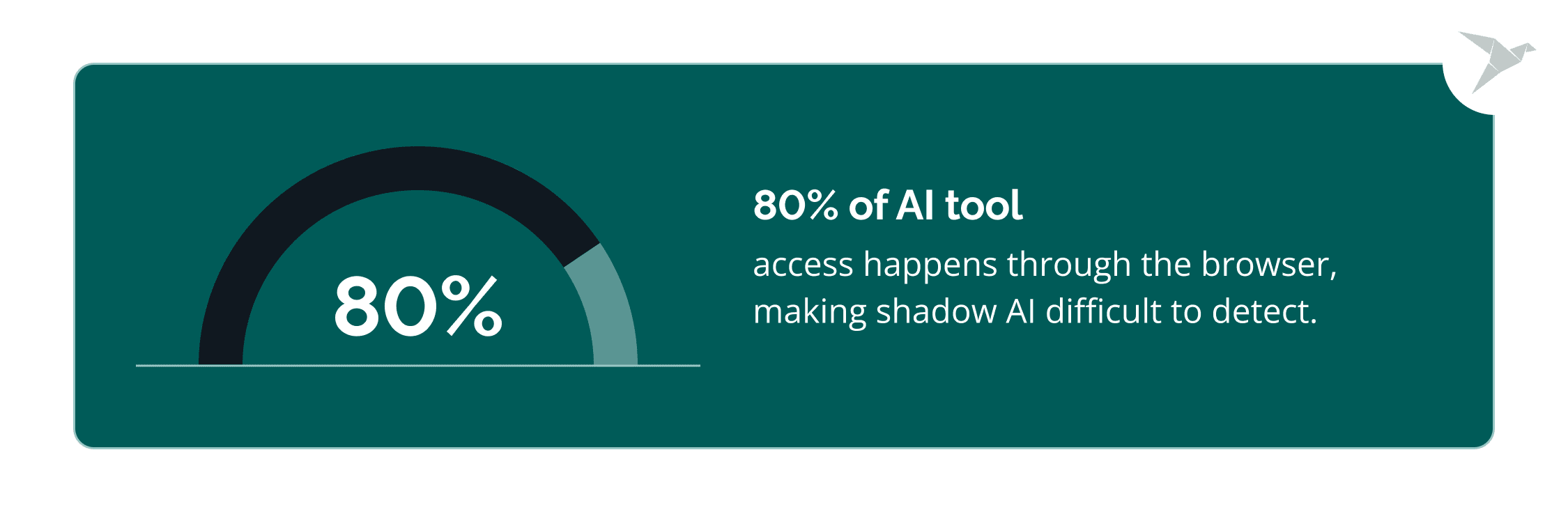

In practice, shadow AI refers to GenAI-powered tools, browser-based assistants, extensions, and AI-first browsers used independently by staff. They often operate directly in the browser, which makes them easy to adopt and hard for IT teams to detect.

A common example is an employee using a personal ChatGPT or Claude account to edit documents, analyze internal data, or work with source code. This can happen without malicious intent and without awareness of how the AI provider stores, processes, or reuses data.

The spread of agentic browsers and embedded AI assistants accelerates this trend. Employees see immediate productivity gains and adopt tools quickly, often without understanding the security, privacy, or compliance implications. In this context, shadow AI in cybersecurity describes the growing gap between how AI is actually used across teams and what security leaders can see or govern.

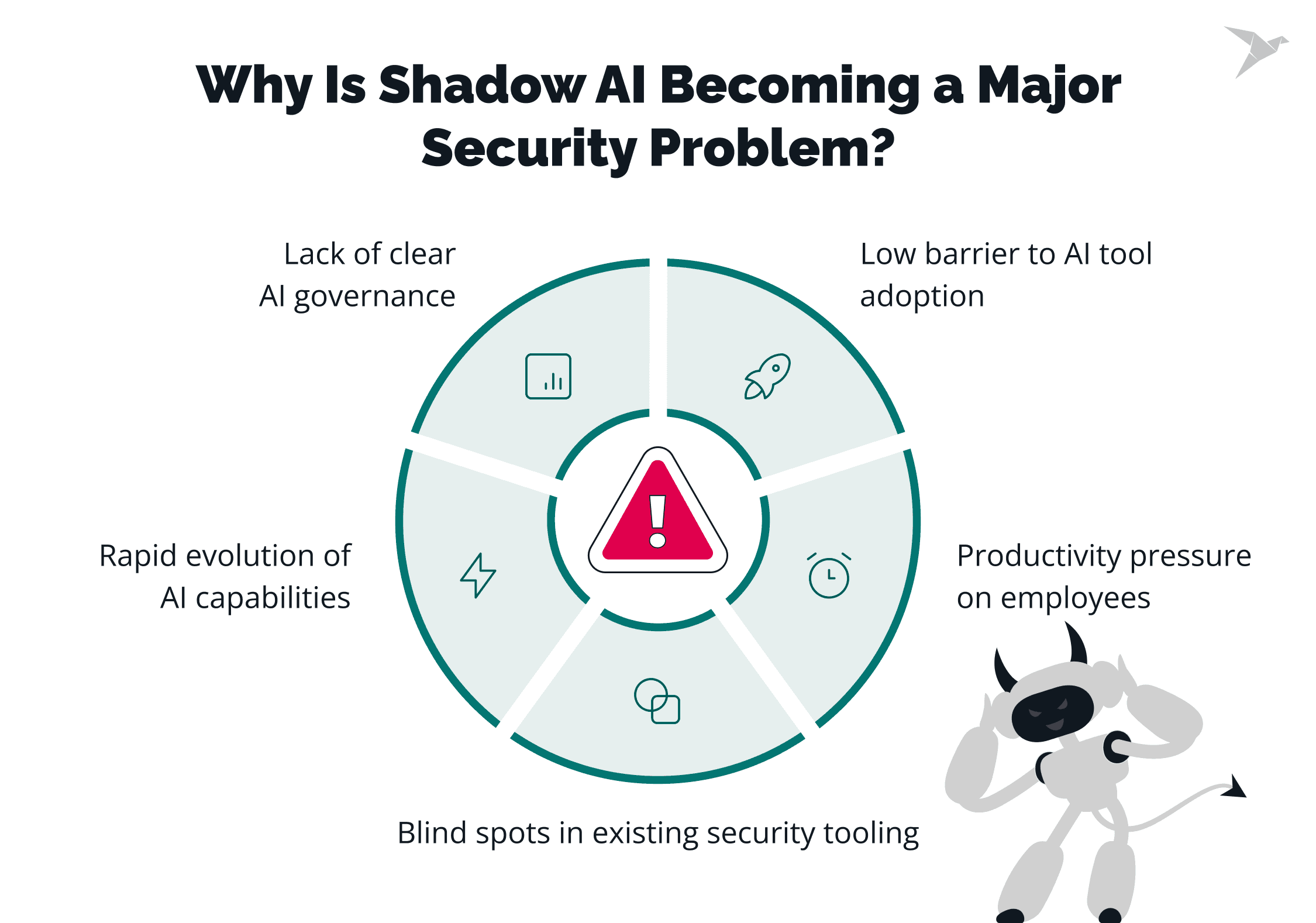

Why Is Shadow AI Becoming a Major Security Problem?

Shadow AI aligns with how people work today, while security and governance models were built for a different operating reality.

Low barrier to AI tool adoption

Most AI tools require no installation and are often free to use. Employees can start using them instantly with personal accounts, outside of IT visibility or approval.

Productivity pressure on employees

Teams are expected to deliver faster with fewer resources. AI helps draft content, analyze data, summarize dashboards, and review code. When approved tools or guidance are missing, people choose whatever helps them move forward.

Lack of clear AI governance

Many organizations have not defined which AI tools are allowed, how data can be shared, or who owns AI security risks. In that gap, individual users make decisions without a security or compliance context.

Rapid evolution of AI capabilities

AI tools no longer just respond; they act. Agentic browsers, assistants, and extensions can read across tabs, interact with SaaS apps, and perform multi-step actions. Existing security models are not designed for intent-based behavior.

Blind spots in existing security tooling

Traditional controls focus on endpoints, networks, and sanctioned applications. Shadow AI, instead, operates inside the browser using user-level permissions, which makes its activity hard to distinguish from normal work. This is why shadow AI in data security has become a visibility and governance problem.

Expert view: What internal blind spots most often lead to uncontrolled AI usage?

I will pinpoint 3 critical blind spots that fuel the surge in Shadow AI: the knowledge/sensitivity gap, the process/approval bottleneck, and the rise of risky autonomous systems.

The first one is that people see no harm in AI. There is a confidence paradox where the majority of employees trust unauthorized tools to protect their data, often because they mistakenly believe using a personal device or a "low-risk" tool makes it safe. Equally, they don't believe that inserting just one confidential number into an AI tool would cause a disaster. For such employees, training must shift from abstract warnings to use-case-centered scenarios that show exactly how data is leaked.

The second issue arises because employees adopt AI faster than IT can assess it, and approved tools do not always give them what they expected. If approved tools are "behind state-of-the-art," employees face a productivity-risk asymmetry: the gains are immediate, but the risks are abstract and delayed.

Lastly, as we move toward agentic AI (autonomous bots performing complex tasks), the risk shifts from simple data input to a lack of human-in-the-loop oversight. These systems can damage brand reputation or commit legal errors if deployed without IT oversight.

How Does Shadow AI Create Compliance and Legal Exposure?

In the case of Shadow AI, regulated data is processed outside approved systems, controls, and audit trails.

GDPR and personal data processing risks

When employees paste personal data into AI tools, the organization becomes responsible for that processing. In many cases, there is no clear lawful basis, no transparency, and no control over how long the data is stored or reused. This directly conflicts with GDPR requirements around purpose limitation, data minimization, and accountability.

SOC 2, ISO 27001, and internal control gaps

Security and compliance frameworks rely on documented controls and consistent enforcement. Shadow AI bypasses approved tools and processes, creating gaps in access control, data handling, and change management. From an audit perspective, these activities sit outside the defined control environment.

Data residency and cross-border transfer issues

Many AI providers process data across multiple regions. When users submit sensitive information through unapproved AI tools, data may be transferred or stored in jurisdictions that violate data residency requirements or contractual obligations with customers.

Records retention and right-to-erasure challenges

Regulations such as GDPR, UK GDPR, and CCPA/CPRA require organizations to delete personal data on request or retain it only for defined periods. When data is submitted to external AI tools without tracking or contracts in place, there is no reliable way to locate, delete, or confirm how long that data is stored.

This makes it difficult to honor erasure requests, retention limits, or demonstrate compliance during audits or investigations.

Vendor and third-party risk violations

Using AI tools without vendor review introduces unassessed third parties into the data flow. This often breaks procurement rules, data processing agreements, and security addenda that require due diligence, risk assessment, and contractual safeguards.

Auditability and evidence gaps

Shadow AI activity rarely appears in standard security or access logs. If regulators, customers, or auditors ask how data was processed or who accessed it, organizations may have no evidence to provide. This lack of traceability makes compliance claims difficult to support and increases regulatory and contractual risk.

Conflict with internal data classification and handling policies

Most organizations define how confidential, restricted, or regulated data can be used. Shadow AI often bypasses these rules because users treat AI prompts as "temporary" or "private," even when policies prohibit sharing that data with external services.

Why Do Traditional Security Policies Fail Against Shadow AI?

Traditional security policies are often written for controlled software environments, so they can't regulate adaptive, user-driven AI tools embedded in daily workflows. This creates several problems.

Policies written for software, not AI behavior

Acceptable-use and IT policies usually focus on installing software, accessing systems, or storing data. AI tools operate differently. They respond to prompts, learn from context, and act on user intent. Policy language rarely covers how employees can input data into AI systems or how AI-generated output should be used.

Lack of enforcement mechanisms

Even when policies mention AI, enforcement is weak. Shadow AI runs inside browsers and SaaS apps using legitimate user permissions. From a technical standpoint, its actions look like normal work, which makes automated enforcement difficult.

Poor communication with employees

Many employees are unaware that using personal AI accounts for work can violate policy or create compliance issues. Policies are often written in abstract terms, shared once, and never tied to real examples. As a result, people do not connect policy rules to everyday AI use.

Security teams lagging behind business adoption

AI adoption moves faster than policy updates. By the time rules are written and approved, teams have already integrated AI into their workflows. This gap between governance and practice is a core challenge in shadow AI and cybersecurity.

Why blocking fails

Blocking access to a few well-known AI tools does not stop shadow AI. AI features are embedded across design, writing, analytics, and collaboration platforms. When one tool is blocked, users switch to another or use personal devices and accounts. The result is less visibility and more risks.

Some organizations are taking a different approach. Instead of trying to stop AI use entirely, they focus on understanding how AI is actually used and guiding employees toward safer, approved practices.

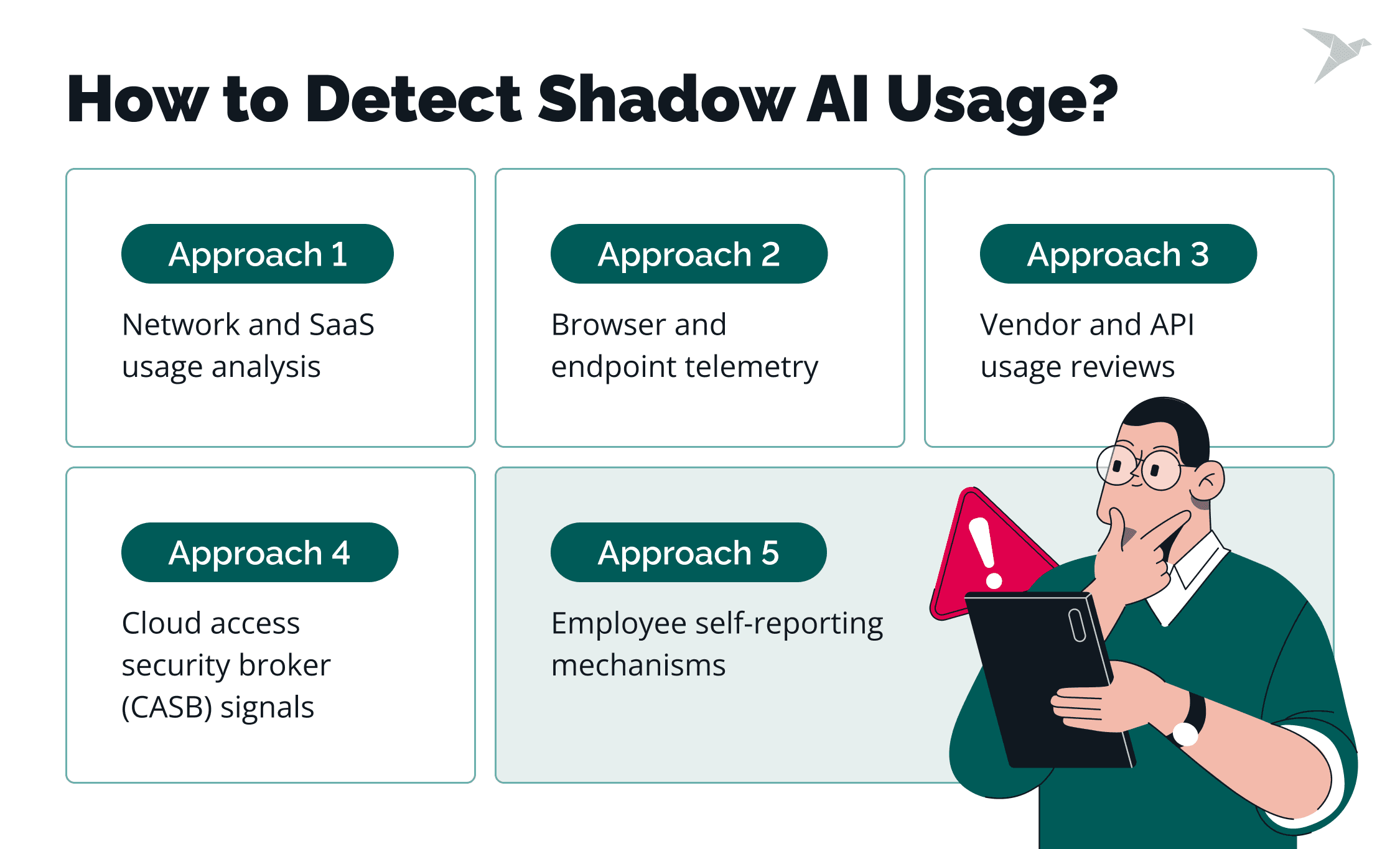

How Can Organizations Detect Shadow AI Usage?

Shadow AI rarely looks the same twice. Unlike shadow IT, it does not always appear as a clearly unauthorized app or service. Sometimes it shows up as a pattern: reports that appear unusually fast, or AI suggestions inside tools no one reviewed. Sometimes it looks like technical noise, such as a steady rise in traffic to domains your teams do not normally use.

You may also see personal AI accounts connected to work workflows, or a browser extension asking for broader permissions than before. None of these signals confirms unapproved AI use on its own, but they often point to unmanaged use of AI tools.

If you cannot clearly explain where AI is used, by whom, and for what tasks across teams, that lack of visibility is usually the first signal. To gain visibility, organizations need to combine technical monitoring with a simple way for employees to disclose new tools and workflows.

Network and SaaS usage analysis

Review outbound traffic and SaaS discovery data to identify AI-related destinations and features in tools you already allow. Look for:

- New or rising traffic to AI domains and model endpoints.

- AI features enabled inside common SaaS platforms (writing assistants, meeting notes, design tools).

- Sign-in patterns that suggest personal accounts used for work tasks.
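As a rough illustration of the first check, the sketch below counts requests per user to a watchlist of AI-related domains. The record format (dicts with `user` and `host` keys), the function names, and the watchlist itself are assumptions made for this example; in practice the input would come from your own proxy, DNS, or SaaS discovery export, and the list would need regular maintenance.

```python
from collections import Counter

# Illustrative watchlist only (an assumption); maintain your own list of AI destinations.
AI_DOMAINS = {"chatgpt.com", "claude.ai", "api.openai.com", "api.anthropic.com"}


def is_ai_host(host: str, watchlist: set[str] = AI_DOMAINS) -> bool:
    """Return True if host equals a watchlist domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in watchlist)


def count_ai_requests(records: list[dict]) -> Counter:
    """Count requests to watchlisted AI domains per (user, host) pair."""
    hits: Counter = Counter()
    for rec in records:
        if is_ai_host(rec["host"]):
            hits[(rec["user"], rec["host"].lower())] += 1
    return hits
```

Comparing these counts week over week is one simple way to spot the "new or rising traffic" signal; the counts alone do not show whether a personal or an enterprise account was used.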

Browser and endpoint telemetry

Shadow AI often lives in the browser, so browser and endpoint signals are a practical starting point. Focus on:

- Browser extension inventories and permission changes (for example, "read and change data on all websites").

- New AI-first browsers or assistants being installed.

- Clipboard, upload, and download patterns that align with "copy-paste into AI" workflows.

- Endpoint process and DNS telemetry that shows new AI tooling usage.
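The extension permission check can be sketched as a comparison of two inventory snapshots. This is a minimal example under stated assumptions: the snapshots are plain dicts mapping an extension ID to its permission list, the permission strings are modeled on Chrome-style manifest values, and the choice of which permissions count as "broad" is a policy decision, not a standard.

```python
# Permission strings treated as "broad" in this sketch (an assumption; tune to your policy).
BROAD_PERMISSIONS = {"<all_urls>", "*://*/*", "clipboardRead"}


def broad_permissions(permissions: list[str]) -> set[str]:
    """Return the subset of permissions that this sketch considers broad."""
    return set(permissions) & BROAD_PERMISSIONS


def newly_broad(
    previous: dict[str, list[str]], current: dict[str, list[str]]
) -> dict[str, set[str]]:
    """Map extension ID -> broad permissions present now but absent in the previous snapshot."""
    findings: dict[str, set[str]] = {}
    for ext_id, perms in current.items():
        gained = broad_permissions(perms) - broad_permissions(previous.get(ext_id, []))
        if gained:
            findings[ext_id] = gained
    return findings
```

Newly installed extensions appear in the result as well, because they have no previous snapshot to compare against.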

Cloud access security broker (CASB) signals

CASB and SSE platforms can help surface unsanctioned apps and risky data paths. Useful signals include:

- Newly discovered AI apps and categories.

- Data uploads to unapproved services.

- "High-risk" cloud apps based on vendor posture or missing controls.

- Policy hits tied to sensitive data types (PII, source code, financial exports).

Vendor and API usage reviews

Shadow AI is not only browser chat. It also appears as API calls, plugins, and unofficial integrations. Build a lightweight review around:

- New API keys created for LLM providers.

- Unexpected spend or usage spikes for AI services.

- Plugins or connectors added to tools like ticketing, CRM, or documentation platforms.

- OAuth grants to third-party AI apps that request broad scopes.
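For the OAuth review, a lightweight filter over exported grants may be enough to start with. The sketch below assumes a hypothetical export with a user, an app name, and a scope list per grant; the scope names in `BROAD_SCOPES` are placeholders and should be replaced with the scopes your identity provider actually issues.

```python
from dataclasses import dataclass

# Hypothetical scope names treated as broad; replace with your identity provider's scopes.
BROAD_SCOPES = {"files.read.all", "mail.read", "full_access"}


@dataclass
class OAuthGrant:
    user: str
    app: str
    scopes: list[str]


def risky_grants(grants: list[OAuthGrant], approved_apps: set[str]) -> list[OAuthGrant]:
    """Return grants to unapproved apps that include at least one broad scope."""
    return [
        g
        for g in grants
        if g.app not in approved_apps
        and any(s.lower() in BROAD_SCOPES for s in g.scopes)
    ]
```

Grants to approved apps are skipped here on purpose; whether approved apps should also be re-checked for scope creep is a separate decision.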

Employee self-reporting mechanisms

You’ll find more Shadow AI by asking than by guessing. Make it easy for teams to tell you what they’re trying, and why:

- Short, recurring check-ins with team leads ("What AI tools are you using this month?").

- A simple intake form to request or disclose tools (with fast turnaround).

- Clear examples of what should be reported (personal accounts, extensions, embedded AI features).

- A "no penalty for disclosure" message, paired with a path to approved alternatives.

A practical rule: start with a small set of conversations, then validate what you learn using logs and telemetry. That combination usually surfaces the real hotspots faster than broad monitoring.

What Are the Most Effective Ways to Manage Shadow AI Risks?

There is no sense in trying to stop AI adoption altogether. Your goal is to enable safe use. The most effective approaches combine clarity, structure, and ongoing visibility.

Defining approved AI tools and use cases

Start by naming which AI tools are allowed and what they can be used for. Approval should be tied to concrete use cases, not broad tool categories. This helps employees understand where AI fits into their work and removes the need to guess or work around policy.
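One way to make "approval per use case" concrete is a small lookup that pairs each tool with the tasks it is approved for. The tool and use-case names below are hypothetical, and the structure is only a sketch of the idea, not a recommended policy format.

```python
# Hypothetical tool and use-case names, for illustration only.
APPROVED_USES: dict[str, set[str]] = {
    "enterprise-chat-assistant": {"drafting", "summarizing-public-docs"},
    "code-assistant": {"code-review", "unit-test-generation"},
}


def is_approved(tool: str, use_case: str) -> bool:
    """Return True only if this tool is approved for this specific use case."""
    return use_case in APPROVED_USES.get(tool, set())
```

With this shape, an approved tool used for an unlisted task is treated the same as an unapproved tool, which matches the point that approval attaches to the use case rather than to the tool category.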

Data classification and usage boundaries

Clear data boundaries matter more than long tool lists. Define which data types must never be shared with external AI services, such as regulated personal data, customer records, or proprietary code. Tie these rules to real workflows so employees can apply them in practice.
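A data boundary is easier to follow when it can be checked before text leaves the organization. The sketch below flags two example data types in a draft prompt using regular expressions. The patterns and category names are illustrative assumptions; real classification would need patterns for your own regulated data types and will still miss cases that regexes cannot catch.

```python
import re

# Illustrative patterns only (assumptions); extend with your own restricted data types.
RESTRICTED_PATTERNS = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def restricted_matches(text: str) -> list[str]:
    """Return the sorted names of restricted data types detected in text."""
    return sorted(
        name for name, pattern in RESTRICTED_PATTERNS.items() if pattern.search(text)
    )
```

A check like this could sit in a managed browser extension or an internal AI gateway and warn the user instead of silently blocking, which keeps the rule visible in the workflow where it applies.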

Secure AI access patterns

Provide safer ways to access AI where possible. This can include enterprise accounts, managed browser environments, or controlled API access. When secure options exist, employees are less likely to rely on personal accounts or unmanaged tools.

Secure AI use in DevSecOps and CI/CD pipelines

For engineering teams, Shadow AI often appears through code assistants, AI-driven testing tools, or direct API calls to external models. To manage these risks, you need to define where AI-generated code is allowed, how prompts are handled, and how outputs are reviewed before entering repositories or pipelines.

Vendor risk assessments for AI providers

Treat AI vendors like any other third party that processes data. Review how they store inputs, whether data is retained or reused, and where processing takes place. Document these decisions so security, legal, and compliance teams share the same understanding of risk.

Monitoring and continuous improvement

Shadow AI changes quickly. Monitor usage patterns, revisit approvals, and reassess tools as features evolve. Regular reviews help catch new risks early and prevent approved tools from becoming blind spots.

Shared ownership between security and the business

Effective management requires partnership. Security teams define guardrails, but business teams explain how AI is actually used. Involving engineering, legal, privacy, and a cybersecurity specialist in these discussions keeps controls practical and aligned with real work.

What Role Do Security Leaders Play in Controlling Shadow AI?

Security leaders turn fragmented shadow AI use into a managed, understood risk that the organization can make informed decisions about.

Translating AI risk for executives

CISOs and vCISOs need to explain shadow AI in business terms. That means connecting unauthorized AI usage to data security risks, compliance exposure, and business impact, rather than focusing on model internals or tooling details. Clear framing helps executives understand trade-offs and shape practical AI adoption strategies.

Aligning legal, IT, and engineering teams

Shadow AI cuts across traditional boundaries. Security leaders are often the only group positioned to bring legal, privacy, IT, and engineering into the same conversation. Alignment ensures that policies, controls, and workflows reflect how AI-powered tools and other forms of AI technology are actually used, including across external AI platforms.

Setting realistic risk tolerance

Zero use is rarely realistic. Security leaders help define where AI use is acceptable, where it is restricted, and where it is prohibited. This shared understanding allows teams to innovate while still knowing when to prioritize AI solutions that meet security requirements and when generative AI models introduce too much uncertainty.

Preparing for audits and regulatory scrutiny

Auditors and regulators will ask how AI use is governed. Security leaders should ensure there is documentation, evidence of oversight, and a clear narrative that explains how AI risks are identified, assessed, and monitored over time. That includes an AI security assessment, records of how AI-generated content is reviewed when relevant, and proof that governance extends beyond approved tools alone.

External Expert Perspective: How Organizations Should Really Handle Shadow AI

I believe the most common misconception is that Shadow AI is just another version of Shadow IT. Indeed, we don't have much historical data to confidently map actions to consequences.

For example, we all know what might happen if a vulnerable package is used in the company's software. We've seen many such scenarios. With Shadow AI, it is different. We have not seen this a lot. We understand the riskiness of the situation, but our vision of the consequences is blurry.

In reality, Shadow AI is a uniquely dangerous category because AI systems actively ingest and potentially use sensitive data to train future models, often without audit trails or data-handling agreements.

What measures actually work in practice

I would say that a successful approach is blended and risk-proportionate. Instead of just blocking, use passive log-based detection to see what tools are actually in use without inspecting raw prompt content. Create a Model Inventory and use an obligations-to-evidence mapping. This means every AI tool has a clear owner and documented risk acceptance.
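The Model Inventory and obligations-to-evidence mapping described above could be represented roughly as follows. This is a sketch under assumptions: the record fields, the obligation names, and the evidence references are all hypothetical, and a real inventory would likely live in a GRC tool or a shared register rather than in code.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AIToolRecord:
    name: str
    owner: str                       # accountable person or team
    risk_accepted_by: Optional[str]  # who signed off on residual risk, if anyone
    # obligation -> evidence reference (document or ticket ID); None means no evidence yet
    evidence: dict[str, Optional[str]] = field(default_factory=dict)


def gaps(record: AIToolRecord) -> list[str]:
    """List what is missing before this tool counts as owned and documented."""
    issues: list[str] = []
    if not record.owner:
        issues.append("no owner")
    if not record.risk_accepted_by:
        issues.append("no documented risk acceptance")
    issues += [f"no evidence for: {ob}" for ob, ev in record.evidence.items() if not ev]
    return issues
```

Running `gaps` over every record gives a simple worklist: any tool with a non-empty result lacks a clear owner, a documented risk acceptance, or evidence for at least one obligation.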

Finally, make employee education engaging, relevant, and appealing. Take safety training as an analogy: repeating the word “fire” 100 times is far less effective than showing videos and running simulated fire situations. Wrap guidelines into real-world use cases (like the Samsung code leak case) to make the consequences tangible. This builds a speak-up culture rather than a culture of concealment.

Pro advice for security leaders

On the reactive side, stay updated on the AI supply chain. The next big threat may come from an auto-enabled feature in a sanctioned SaaS tool you already use.

On the proactive side, foster psychological safety. If employees feel that using AI makes them look "less capable," they will hide it. Leaders must normalize AI use publicly and provide clear guardrails so employees feel safe enough to learn in public.

Final Thoughts

Shadow AI reflects a deeper shift in how work happens. Employees already use AI to move faster, analyze information, and automate routine tasks. The main challenge for organizations is making that use visible, governed, and secure.

Microsoft found that 71% of UK employees have used unapproved consumer AI tools at work, and 51% do so every week. A 2025 Gallup workforce survey states that about 23% of employees use AI tools at work at least a few times per week, while daily use has grown to 10%, up significantly from previous years.

Blocking a few chatbots won’t keep up with that reality. Shadow AI lives in browsers, extensions, and embedded features across SaaS and often uses legitimate user permissions, with limited audit trails. For security leaders, the bigger concern is visibility. Gartner reports that 69% of cybersecurity leaders either have evidence or suspect that employees are already using public generative AI tools in their work environment, often outside formal governance.

I expect that governance will continue to struggle with the pace of AI adoption. Shadow AI threats will become more tangible because breaches will become more common, and the risk will no longer be abstract. Among organizations, we will see a shift from gatekeeper to enabler models, with companies continuing to move away from blocking tools toward managing them through "Managed AI Adoption."

So, the practical path forward is to define approved use cases, set data boundaries that people can follow, provide secure access options, keep lightweight visibility on what’s actually being used, and perform AI model penetration testing. Done well, this doesn’t slow teams down; it helps them use AI with confidence, without turning the browser into an unmanaged execution environment.

FAQ

Shadow AI in cybersecurity refers to employees using shadow AI tools for work without security approval, oversight, or governance. These unauthorized AI tools often run in browsers or SaaS apps and rely on external AI models to process company data outside established controls.

This is how shadow AI happens in day-to-day work: people adopt convenient artificial intelligence tools that create blind spots for security teams and open the door to security vulnerabilities.

Shadow AI is not illegal by default. The cybersecurity risks of shadow AI appear when AI-powered services are used in ways that violate laws, regulations, or contracts, especially when AI tools handle data without proper safeguards. In those cases, unauthorized AI use can lead to compliance failures.

Shadow IT covers any unapproved software, hardware, or services. Shadow AI is more specific and harder to detect because shadow AI systems are embedded into everyday workflows, respond to prompts, and can process sensitive data without leaving traditional audit trails. Common examples of shadow AI include public chatbots, writing assistants, meeting summarizers, or coding tools used without review.

Shadow AI cannot be fully eliminated in most organizations. Employees will continue to adopt AI tools to work more efficiently, but that creates significant risks. The goal is to manage shadow AI through visibility, clear rules, and safe alternatives rather than trying to block all usage, especially where data leakage is a concern.

TechMagic Academy
