Why Vibe Coding Projects Break in Production and How to Prevent It

Last updated: 4 March 2026

AI tools can now generate working software in minutes. A founder can describe an idea, press enter, and get a prototype the same day. The speed feels revolutionary, but many teams hit the same wall a few weeks later: the code works in a demo but breaks under real-world circumstances.

The rise of AI-assisted development has made rapid experimentation easier than ever. GitHub reports that over 70% of developers already use AI coding tools, while Stack Overflow surveys show that nearly 60% of developers question the reliability of AI-generated code. This gap between speed and stability creates a new challenge for companies trying to move from prototype to production.

In our new article, we explain why fast AI-generated projects often struggle outside controlled environments. We also explore how AI coding approaches gained popularity, what risks appear when these projects scale, and why expert engineering intervention becomes necessary. Using industry experience and real-world observations, we look at how teams turn quickly generated code into reliable, production-ready systems.

Key takeaways

- AI coding tools dramatically accelerate product prototyping and early experimentation.

- Many AI-generated codebases contain hidden architectural, security, and stability issues.

- The transition from prototype to production often exposes weaknesses in automatically generated code.

- Experienced engineers play a critical role in stabilizing and restructuring AI-created systems.

- With the right technical oversight, fast AI development can evolve into reliable and scalable products.

What Is Vibe Coding and Why Teams Use It

Vibe coding is an approach to software development where AI tools generate a significant part of the code. Instead of building everything from scratch, developers describe the goal in plain language. The AI suggests functions, structures, or even full features. The developer reviews the output, adjusts it, and connects it to the rest of the system.

Tools like ChatGPT, GitHub Copilot, Claude, and Cursor are commonly used to build apps in this workflow. They help draft logic, refactor code, write tests, and solve specific technical problems. The human developer stays in control, but the AI handles much of the heavy lifting.

Teams can move quickly, experiment more freely, and ship earlier versions of a product without building a large engineering team. However, speed can hide complexity. Code generated for quick results may include known vulnerabilities or miss guardrails that prevent failures under load. As projects grow, these common issues become harder and more expensive to fix.

In short, vibe coding shifts part of the coding effort from manual writing to guided generation.

Why teams are adopting it

AI-assisted development is now mainstream. More than half of software teams report using AI tools in their daily work. The reason is clear: speed.

According to McKinsey research, high-performing teams that adopt AI thoughtfully see 16-30% improvements in productivity and time to market. That gain can mean shipping earlier, testing ideas faster, and responding to customer feedback sooner.

For startups, this can reduce early development costs. For enterprise teams, it can shorten delivery cycles. In both cases, AI support allows smaller teams to move faster without immediately expanding headcount.

Vibe coding is especially common in MVP development, prototypes, proof-of-concept projects, and internal tools. In these scenarios, learning quickly often matters more than building a perfectly optimized system from day one.

Where Vibe Coded Applications Usually Fail

Vibe-coded applications often look solid at first glance, but what we see in practice is that weaknesses tend to surface as soon as real-world pressure increases. Let’s take a look at the root causes of the issues.

Logic errors that appear only in edge cases

Most vibe-coded features work well in standard scenarios: the main flow runs, and the output looks correct. However, problems start at the edges.

AI generates code based on patterns, not a true understanding of business logic. Rare conditions, unusual inputs, or complex state changes can expose hidden flaws. This is one of the most common vibe coding risks: the system seems reliable until real users behave in unexpected ways.

At that point, debugging AI-generated code becomes difficult. The code may be syntactically valid, but with no record of the reasoning behind it, investigation and fixes slow down. That’s why AI code remediation services are important when hidden logic issues start appearing.
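To make the edge-case problem concrete, here is a minimal sketch in Python. The function names and the "order totals in cents" data are hypothetical, chosen only for illustration. The first version is the kind of code that passes a demo; the second makes an explicit decision about the inputs a demo never exercises.

```python
def average_order_value_naive(order_totals_cents):
    # Works on demo data, but raises ZeroDivisionError for a customer with no orders.
    return sum(order_totals_cents) / len(order_totals_cents)


def average_order_value(order_totals_cents):
    """Return the mean order total in cents, or None when there are no orders."""
    if not order_totals_cents:
        return None  # explicit, documented behavior for the empty case
    if any(total < 0 for total in order_totals_cents):
        # Assumption for this sketch: refunds are tracked elsewhere, so a
        # negative total signals corrupted input rather than a valid order.
        raise ValueError("order totals must be non-negative")
    return sum(order_totals_cents) / len(order_totals_cents)


print(average_order_value([1000, 2000]))  # 1500.0
print(average_order_value([]))            # None
```

Whether the empty case should return `None`, zero, or raise an error is a business decision. The point is that someone has to make it deliberately.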

Fragile or inconsistent architecture

AI is effective at generating pieces of functionality but less reliable at designing long-term structures. In many projects, architectural decisions are unplanned, naming is inconsistent, and responsibilities overlap. As new features are added quickly, the system becomes harder to maintain.

Routine updates can trigger unexpected side effects. What should be simple code debugging turns into a deep analysis because dependencies and design intent are unclear. Poor structure increases technical debt, so over time, rework costs more than the initial speed saved.

Lack of tests and validation

Testing is often reduced in fast AI-assisted builds. Surface-level checks replace structured validation, and edge cases go untested. Sometimes, error handling remains shallow. All these gaps often stay invisible until the product begins to scale.

Fixing defects after release is significantly more expensive than addressing them during development. Without strong tests, troubleshooting becomes reactive and time-consuming.
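As a rough illustration of structured validation, the sketch below uses Python's built-in `unittest` module to test boundaries and malformed input, not only the main flow. The `parse_quantity` function and the `MAX_QUANTITY` limit are hypothetical stand-ins for any function that accepts user input.

```python
import unittest

MAX_QUANTITY = 1000  # hypothetical business limit, assumed for this example


def parse_quantity(raw: str) -> int:
    """Parse a user-supplied quantity, accepting only whole numbers in 1..MAX_QUANTITY."""
    text = raw.strip()
    if not text.isdecimal():
        raise ValueError(f"not a whole number: {raw!r}")
    value = int(text)
    if not 1 <= value <= MAX_QUANTITY:
        raise ValueError(f"quantity out of range: {value}")
    return value


class ParseQuantityEdgeCases(unittest.TestCase):
    def test_main_flow(self):
        self.assertEqual(parse_quantity("3"), 3)

    def test_surrounding_whitespace_is_tolerated(self):
        self.assertEqual(parse_quantity(" 7 "), 7)

    def test_boundaries_are_accepted(self):
        self.assertEqual(parse_quantity("1"), 1)
        self.assertEqual(parse_quantity(str(MAX_QUANTITY)), MAX_QUANTITY)

    def test_invalid_input_is_rejected(self):
        for raw in ["", "0", "1001", "-5", "3.5", "abc"]:
            with self.subTest(raw=raw):
                with self.assertRaises(ValueError):
                    parse_quantity(raw)
```

Saved as a module, these tests can be run with `python -m unittest`. Only the first one covers the path a demo would exercise; the other three cover the inputs that tend to show up in production.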

Missing security and accessibility considerations

Security and accessibility rarely emerge by default in generated output. Independent reviews of AI-generated code often find that many samples include vulnerabilities that map to common OWASP categories. Weak validation or improper authentication logic can reach production unnoticed.

Accessibility gaps are also common. Screen reader support, keyboard navigation, and semantic structure must be intentionally designed and reviewed. Addressing these issues later often requires structured architectural review and refactoring, not small fixes.

Why do issues appear after launch?

AI-assisted development optimizes for speed in the first place, so real complexity appears later. Under real traffic, integrations, and unpredictable user behavior, hidden weaknesses surface. Teams then spend more time diagnosing and fixing issues instead of building new value.

AI can accelerate development, but it does not replace careful design, validation, or expert oversight.

The Risk Layer: Security, Compliance, and Accessibility

When AI-generated code moves from prototype to production, risk increases in ways that are not always visible at first.

Security vulnerabilities introduced by AI-generated code

AI tools don’t “think” like an attacker. They can produce code that works, yet still leaves common openings. Even small flaws like improper input validation, weak authentication flows, and insecure dependencies can open the door to serious incidents. In fast-moving builds, these issues often slip through because the focus is on feature delivery, not defensive design.
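SQL injection is one well-known example of such an opening. The sketch below uses Python's standard `sqlite3` module with an in-memory database. The table, the sample rows, and the function names are made up for illustration. It contrasts a query built by string interpolation with a parameterized one.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, is_admin INTEGER)")
conn.executemany(
    "INSERT INTO users (email, is_admin) VALUES (?, ?)",
    [("alice@example.com", 0), ("root@example.com", 1)],
)


def find_user_unsafe(email):
    # Vulnerable: user input is interpolated straight into the SQL text.
    query = f"SELECT email FROM users WHERE email = '{email}'"
    return conn.execute(query).fetchall()


def find_user_safe(email):
    # Parameterized: the value is passed separately and never parsed as SQL.
    return conn.execute("SELECT email FROM users WHERE email = ?", (email,)).fetchall()


payload = "nobody' OR '1'='1"
print(len(find_user_unsafe(payload)))  # 2: the injected condition matches every row
print(len(find_user_safe(payload)))    # 0: the payload is treated as a literal email
```

Both functions behave identically for ordinary input, which is why the unsafe version can pass a demo and a casual review.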

The impact is expensive. IBM reports that the global average cost of a data breach is $4.44M, and Gartner found that 29% of cybersecurity leaders said their organizations had experienced an attack on enterprise GenAI application infrastructure in the last 12 months.

Risks in regulated and high-risk industries

In regulated environments, security flaws quickly become compliance problems. The cost isn’t only technical fixes. It can include legal work, audits, incident response, and lost trust.

Gartner has repeatedly warned that security and compliance failures remain among the top drivers of enterprise risk. McKinsey reports that organizations operating in highly regulated sectors face growing scrutiny around software supply chain integrity and data protection controls.

When AI-generated components are introduced without strict review, traceability can suffer. Teams may struggle to document how decisions were made, how data flows through the system, or whether controls meet standards such as HIPAA, GDPR, or PCI DSS. In these environments, non-compliance is not just technical debt. It can mean fines, audit findings, and reputational damage.

Zooming out, Statista estimates the global cost of cybercrime will rise from $9.22 trillion in 2024 to $13.82 trillion by 2028. That trend is why “ship first, secure later” is a risky strategy for any high-impact system.

Accessibility gaps AI often misses

Accessibility issues are easy to overlook in AI-generated UI, especially when prompts focus on “make it look modern” instead of “make it work for everyone.”

WebAIM’s 2025 analysis found 94.8% of 1,000,000 home pages had detectable WCAG failures. On the legal side, one 2024 report counted 3,188 ADA website accessibility lawsuits filed that year.
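Some of these gaps can be caught mechanically. Below is a small sketch of an automated check using Python's standard `html.parser`. The two rules and the sample markup are illustrative assumptions, not a complete WCAG audit: it flags images without an `alt` attribute and clickable `div` or `span` elements that cannot receive keyboard focus.

```python
from html.parser import HTMLParser


class AccessibilityAudit(HTMLParser):
    """Collects (line, message) findings for two common accessibility gaps."""

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        line, _ = self.getpos()
        if tag == "img" and "alt" not in attributes:
            self.findings.append((line, "img is missing an alt attribute"))
        if tag in ("div", "span") and "onclick" in attributes and "tabindex" not in attributes:
            self.findings.append((line, f"clickable <{tag}> is not keyboard-focusable"))


sample = """<main>
<img src="logo.png">
<img src="team.jpg" alt="The team at the office">
<div onclick="openMenu()">Menu</div>
<button type="button">Save</button>
</main>"""

audit = AccessibilityAudit()
audit.feed(sample)
for line, message in audit.findings:
    print(f"line {line}: {message}")
```

A check like this covers only a narrow slice of what accessibility requires. Screen reader behavior and keyboard flows still need manual review.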

Why these risks are expensive to fix later

These gaps get “baked in” as more features are layered on top. Fixing them later often means redesigning flows, reworking components, and re-testing everything, especially in regulated systems.

This is where debugging a vibe-coded application can turn from a quick cleanup into a full stabilization effort, and where structured vibe coding remediation pays off.

Why AI Fixing Tools Often Create New Problems

AI tools can generate quick fixes for broken code, but speed does not always mean accuracy. Many automated fixes solve the visible symptom while leaving the deeper issue untouched.

Lack of context and accountability

AI tools work without full knowledge of the system. They don’t understand business logic, architectural decisions, or long-term product goals. When a tool suggests a patch, it usually focuses on the immediate error message rather than the broader context.

This becomes a challenge when fixing AI code that was already generated by another model. Each new automated fix can introduce slightly different logic or dependencies, making the codebase harder to understand over time.

Compounding technical debt

Quick fixes can accumulate into technical debt. Instead of addressing the design flaw behind a bug, the system receives another layer of workaround logic.

Research from the software engineering community shows that technical debt can consume 20-40% of a development team’s time. When AI-generated patches stack on top of each other, that percentage can grow even faster.

Surface fixes vs. root cause analysis

AI tools typically focus on resolving the immediate failure: a broken function, a failing test, or a runtime error. What they rarely do is perform root cause analysis.

For example, a tool might silence an exception or adjust a condition so a test passes. But the deeper issue (incorrect data flow, flawed architecture, or missing validation) remains in place. The bug disappears temporarily, then returns in a different form later.
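The difference can be sketched in a few lines of Python. All names and the row format here are hypothetical. The first function is a typical symptom-level patch: the exception is gone, but malformed data is silently dropped. The second approach validates data where it enters the system, so the underlying problem stays visible.

```python
from dataclasses import dataclass


def order_total_patched(rows):
    # Symptom-level patch: no more crashes, but malformed rows vanish silently.
    total = 0
    for row in rows:
        try:
            total += row["price_cents"] * row["qty"]
        except (KeyError, TypeError):
            pass
    return total


@dataclass(frozen=True)
class LineItem:
    price_cents: int
    qty: int


def parse_line_item(row):
    # Root-cause fix: validate at the boundary and fail loudly on bad data.
    missing = {"price_cents", "qty"} - set(row)
    if missing:
        raise ValueError(f"line item is missing fields: {sorted(missing)}")
    price_cents, qty = row["price_cents"], row["qty"]
    if not isinstance(price_cents, int) or not isinstance(qty, int):
        raise ValueError("price_cents and qty must be integers")
    if price_cents < 0 or qty < 1:
        raise ValueError("price_cents must be >= 0 and qty must be >= 1")
    return LineItem(price_cents, qty)


def order_total(rows):
    items = [parse_line_item(row) for row in rows]
    return sum(item.price_cents * item.qty for item in items)


rows = [{"price_cents": 500, "qty": 2}, {"price_cents": 300}]  # second row lacks qty
print(order_total_patched(rows))  # 1000: the customer is quietly undercharged
```

Calling `order_total(rows)` on the same data raises a `ValueError` that names the missing field, which points the investigation at whatever produced the incomplete row.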

Rising long-term costs

Short-term fixes often lead to long-term costs. Studies of software maintenance consistently show that fixing defects after release can cost 5-10 times more than resolving them during development.

When automated fixes introduce regressions or new inconsistencies, teams spend more time diagnosing problems and rewriting unstable sections of code. What looked like a quick solution becomes a longer and more expensive repair cycle.

How Vibe Code Rehabilitation Works

Stabilizing a vibe-coded application starts with understanding what is actually in the codebase. Quick fixes rarely work if the structure, dependencies, and risks are unclear.

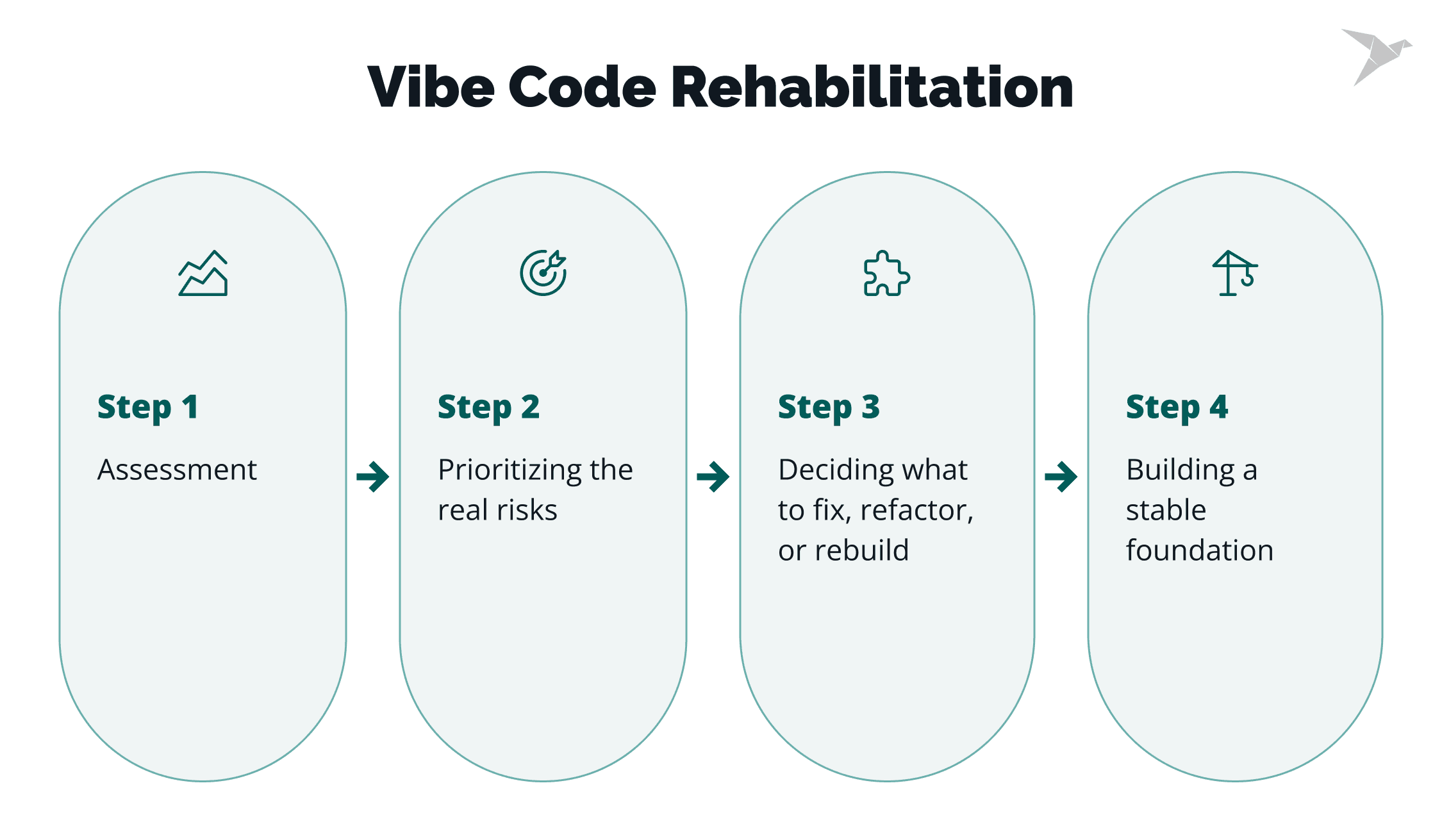

Assessment first

The first step is a structured assessment. Engineers review the architecture, dependencies, data flows, and security posture of the system. The goal is to identify where vibe coding issues appear and how they affect stability and maintainability. This stage often reveals hidden complexity: unused libraries, duplicated logic, missing validation, or fragile integrations.
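Parts of an assessment can be automated. As one narrow example, the sketch below uses Python's standard `ast` module to find functions whose parameters and bodies are structurally identical, a simple signal of duplicated logic. The helper name and the sample source are assumptions made for illustration, and real assessments rely on far more than this single check.

```python
import ast
from collections import defaultdict


def find_duplicate_functions(source: str):
    """Group function names whose parameters and bodies are structurally identical."""
    tree = ast.parse(source)
    groups = defaultdict(list)
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # ast.dump omits line numbers by default, so formatting and
            # position in the file do not affect the fingerprint.
            fingerprint = ast.dump(node.args) + "".join(ast.dump(stmt) for stmt in node.body)
            groups[fingerprint].append(node.name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


sample = '''
def normalize_email(value):
    return value.strip().lower()

def clean_email(value):
    return value.strip().lower()

def normalize_name(value):
    return value.strip().title()
'''

print(find_duplicate_functions(sample))  # [['clean_email', 'normalize_email']]
```

This only catches exact structural copies. Near-duplicates with renamed variables or reordered statements need dedicated tooling or manual review.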

Prioritizing the real risks

Not every problem needs to be fixed immediately, so after the assessment, the next step is prioritization. Teams focus first on the areas that could cause the most damage: security gaps, unstable core logic, and parts of the system that handle sensitive data or critical workflows. Addressing these risks early helps prevent outages, security incidents, and expensive rewrites later.
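One simple way to make prioritization explicit is to score each finding and sort. The sketch below is an illustrative model only: the 1 to 5 scales, the doubling for sensitive data, and the sample findings are assumptions, not a standard methodology.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    impact: int       # 1 (cosmetic) to 5 (data loss or breach); hypothetical scale
    likelihood: int   # 1 (rare) to 5 (expected under normal use); hypothetical scale
    touches_sensitive_data: bool = False

    @property
    def score(self) -> int:
        base = self.impact * self.likelihood
        # Assumed weighting: findings that touch sensitive data count double.
        return base * 2 if self.touches_sensitive_data else base


def prioritize(findings):
    """Return findings ordered from highest to lowest risk score."""
    return sorted(findings, key=lambda finding: finding.score, reverse=True)


findings = [
    Finding("Inconsistent naming in utility module", impact=1, likelihood=5),
    Finding("Unvalidated input in payment webhook", impact=5, likelihood=3,
            touches_sensitive_data=True),
    Finding("No retry on email provider timeout", impact=3, likelihood=3),
]

for finding in prioritize(findings):
    print(finding.score, finding.title)
```

With these sample values, the payment webhook issue ranks first (score 30), well ahead of the retry gap (9) and the naming cleanup (5). The exact weights matter less than having the team agree on them before the work starts.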

Deciding what to fix, refactor, or rebuild

Once risks are clear, engineers decide how each area should be handled. Some parts of the system may only need targeted fixes. Others benefit from refactoring to improve structure and clarity. In some cases, rebuilding a component is faster and safer than trying to repair unstable code.

Building a stable foundation

The final goal is to make the system reliable in the long term. That includes strengthening architecture, improving validation, adding security testing, tightening security controls, and simplifying the code where possible.

When done properly, rehabilitation turns a fragile codebase into something teams can safely maintain, extend, and scale.

The Role of Real Experts in Fixing AI-Generated Code

Industry context makes the difference. In regulated and high-risk environments, teams must meet real expectations around access control, logging, traceability, and secure data handling.

Experts who’ve worked in these settings can spot weak points early before they show up as audit findings, security incidents, or production outages. That early detection also helps teams make the right call on what to fix, refactor, or rebuild, instead of patching symptoms.

Accountability matters too. Real experts take ownership of outcomes. They validate changes, document decisions, and reduce the chance of regressions.

With TechMagic, you’re not relying on a single person. You get a team with 10+ years of software engineering experience and deep expertise across industries such as HealthTech, FinTech, Cybersecurity, and enterprise SaaS, including projects in regulated and high-risk environments. Our engineers review architecture, analyze dependencies and data flows, identify security and compliance gaps, and stabilize systems that were quickly assembled with AI tools.

This hands-on approach supports structured AI code remediation. We:

- assess the codebase;

- prioritize the highest risks;

- refactor fragile components;

- strengthen security and testing;

- and rebuild unstable parts when needed.

Because we combine domain knowledge with practical engineering experience, we can detect hidden issues early and turn experimental code into a stable, maintainable product ready for real production workloads.

Business Outcomes of Proper Vibe Code Fixing

Vibe coding can help teams launch quickly, but without stabilization, the hidden cost often appears later in constant debugging, unstable releases, and partial rewrites. Proper remediation turns a fast-built prototype into a reliable system that the business can depend on.

When engineers fix AI code the right way, the result is not just fewer bugs. It improves how the product evolves and how teams work with it long term.

Key business outcomes include:

- Predictable delivery. Releases become more stable, and teams can plan development without constant emergency fixes.

- Lower long-term maintenance costs. Structured fixes reduce the cycle of patching fragile code and rewriting unstable components.

- Reduced operational risk. Security gaps, unstable integrations, and hidden logic errors are addressed before they cause incidents.

- Better scalability. A stable architecture allows teams to add features, integrate new services, and grow the product without rebuilding core systems.

Vibe coding may lower initial development costs, but organizations often spend far more later trying to stabilize unstable systems. Fixing the foundation early helps avoid that cycle and keeps the product moving forward.

Conclusion

Working code can ship fast and look convincing in a demo, but that is not the same as production readiness. Vibe coding is a powerful tool because it helps teams move quickly, test ideas faster, and launch early versions of products with fewer resources. But it is not a complete solution.

AI-generated code can accelerate development, yet reliability still depends on thoughtful architecture, strong validation, and security oversight. Without that foundation, quick wins often turn into long debugging cycles, unstable releases, and expensive rebuilds. Security is a common blind spot, too: issues like SQL injection can slip in when patterns are reused without understanding the full risk.

Rehabilitation bridges this gap. It keeps the speed that vibe coding enables while restoring the stability and structure needed for real production systems. By reviewing the codebase, prioritizing risks, and strengthening the architecture, teams can turn experimental builds into reliable products.

This is especially true when AI agents are generating larger portions of the system, and the tech stack becomes harder to reason about. This work requires deep knowledge of architecture, testing, and security, plus a clear view of application context, so fixes don’t break critical flows.

Working with experienced developers makes this process far more cost-effective. Instead of months of scattered code changes and risky hotfixes, teams can stabilize with disciplined pull requests, consistent review standards, and repeatable remediation steps that address common pitfalls before they become outages.

FAQs

It can be, but usually not without additional engineering work. AI-generated output can look like functional code, yet production systems still need consistent code quality, clear architecture, strong security controls, and documentation. Many teams rely on AI coding assistants and other vibe coding tools for early speed, but long-term stability depends on what happens after code generation.

Most issues appear only under real conditions. Edge cases, unpredictable user behavior, higher traffic, and integrations expose complex logic gaps that weren’t visible during early demos. In addition, AI suggestions can pull in outdated patterns, which makes scaling and maintenance harder once the product grows.

They can help fix errors, but they often lack full project understanding. Without the right system prompt, enough project context, and visibility into the source code, automated fixes may treat symptoms instead of root causes. This gets worse when teams mix outputs across several programming languages without consistent conventions.

Use a structured review process. Start with a disciplined code review, then map dependencies and architecture, and write unit tests for critical flows before changing behavior. The goal is to decide what to patch, refactor, or rebuild so you can confidently create software that holds up in production, especially when integrations depend on standards like the Model Context Protocol.

TechMagic Academy