How to Choose the Right AI Development Partner

Last updated:2 March 2026

Seventy percent of companies are testing AI, yet fewer than one in three see real financial returns. Many teams start with excitement and end with a stalled pilot, unclear ROI, or a system that works in a demo but fails in production.

Choosing the wrong AI development partner can cost months of effort and budget, and still leave you without measurable impact. This guide helps you avoid that.

You’ll learn how to define clear outcomes before you sign a contract. We’ll break down the difference between an AI MVP and a production system, and why that choice changes everything. You’ll also see what capabilities actually matter, how to test real expertise, and which questions reveal whether a partner can deliver under pressure.

Key takeaways

- Start with a measurable business outcome. Clear goals protect you from choosing the wrong partner and help you focus on real impact, not promises.

- Look for proven expertise in building stable systems, not just experiments, especially if your scope includes advanced analytics.

- The right partner should demonstrate outcomes, lessons learned, and the ability to build future-ready solutions that evolve with your business.

- Ask direct questions about data quality, evaluation methods, and operational ownership. Strong teams answer clearly and explain trade-offs in plain language.

- Require clear security, privacy, and compliance controls before deployment. Discipline here is not optional. It’s what separates short-term wins from systems that hold up under real-world pressure.

What Should You Achieve With An AI Development Partner?

You should aim for a clear outcome you can measure, not a vague “AI initiative.” Before choosing AI development partner, decide what you’re building: an AI MVP to validate an idea, a production rollout, process automation, or an analytics uplift. Then translate that goal into concrete deliverables, so the scope stays tied to results.

AI MVP vs production system: what changes in requirements

An AI MVP helps you learn fast. The focus is speed, feasibility, and whether the data and approach work in practice. A production system must be reliable and safe. It needs stronger engineering around data flows, monitoring, security, and how the system behaves when the model is wrong.

Common AI engagement outcomes

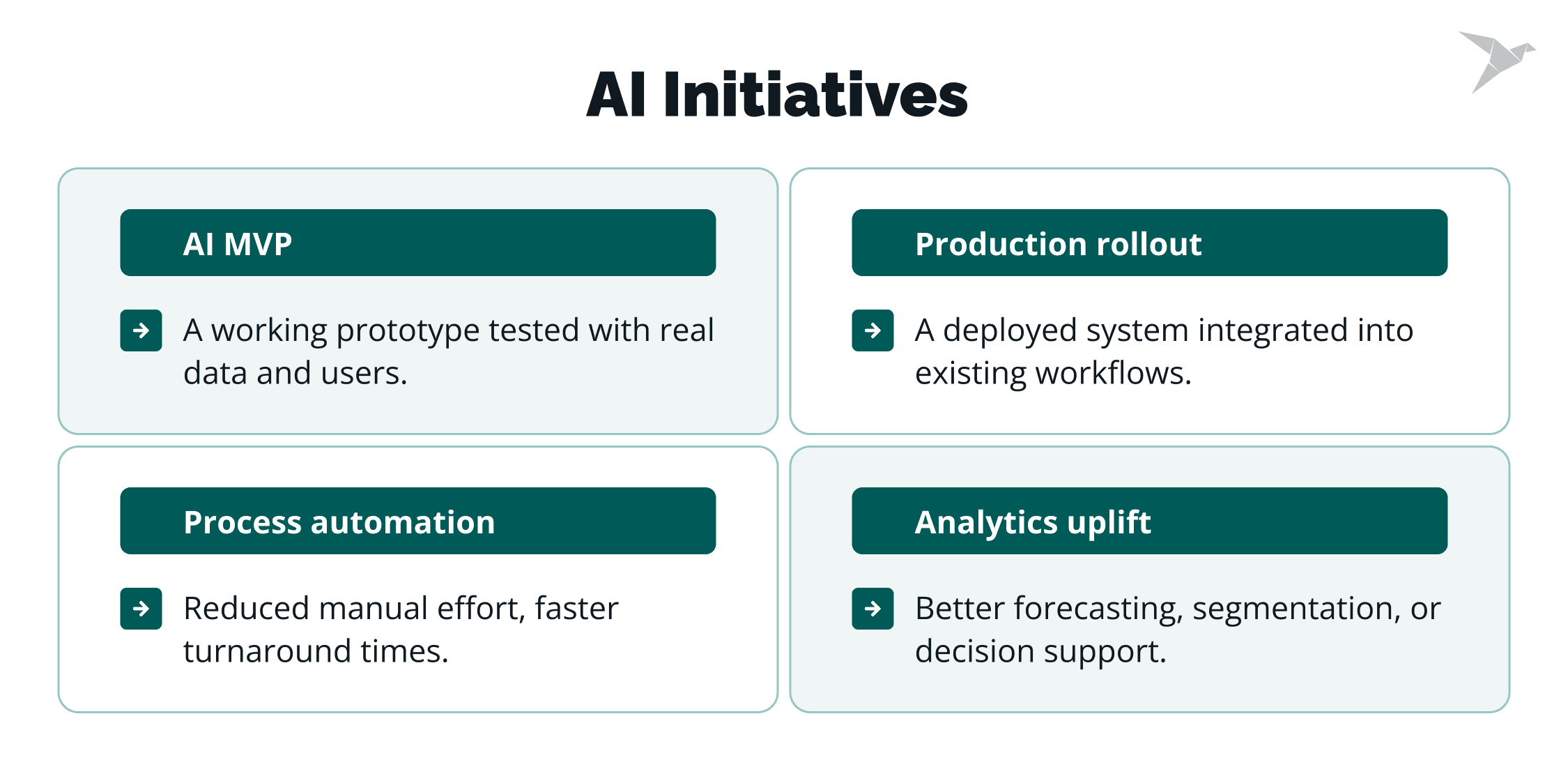

Most AI initiatives fall into a few clear categories:

- AI MVP. A working prototype tested with real data and users.

- Production rollout. A deployed system integrated into existing workflows.

- Process automation. Reduced manual effort, faster turnaround times.

- Analytics uplift. Better forecasting, segmentation, or decision support.

Each outcome leads to different deliverables. For example, process automation may require workflow redesign and system integration. Analytics uplift may require data consolidation and dashboarding. AI development services should match the intended result, not just deliver models.

Ask yourself: what will be different in the business after this project ends? If you can’t answer that in one sentence, the scope is not clear yet.

Defining acceptance criteria and ROI metrics early

Set acceptance criteria and ROI metrics at the start, even if they’re rough. Pick a small set you can track, like time saved, error rate reduced, or a specific accuracy threshold tied to a workflow. This keeps AI development services focused on impact and makes partner evaluation about delivery.

Acceptance criteria should be measurable. For example:

- Model accuracy above a defined threshold.

- Reduction in processing time by a set percentage.

- Increase in lead conversion rate.

- Fewer manual reviews per week

Tie these to financial or operational impact. That is your ROI baseline.

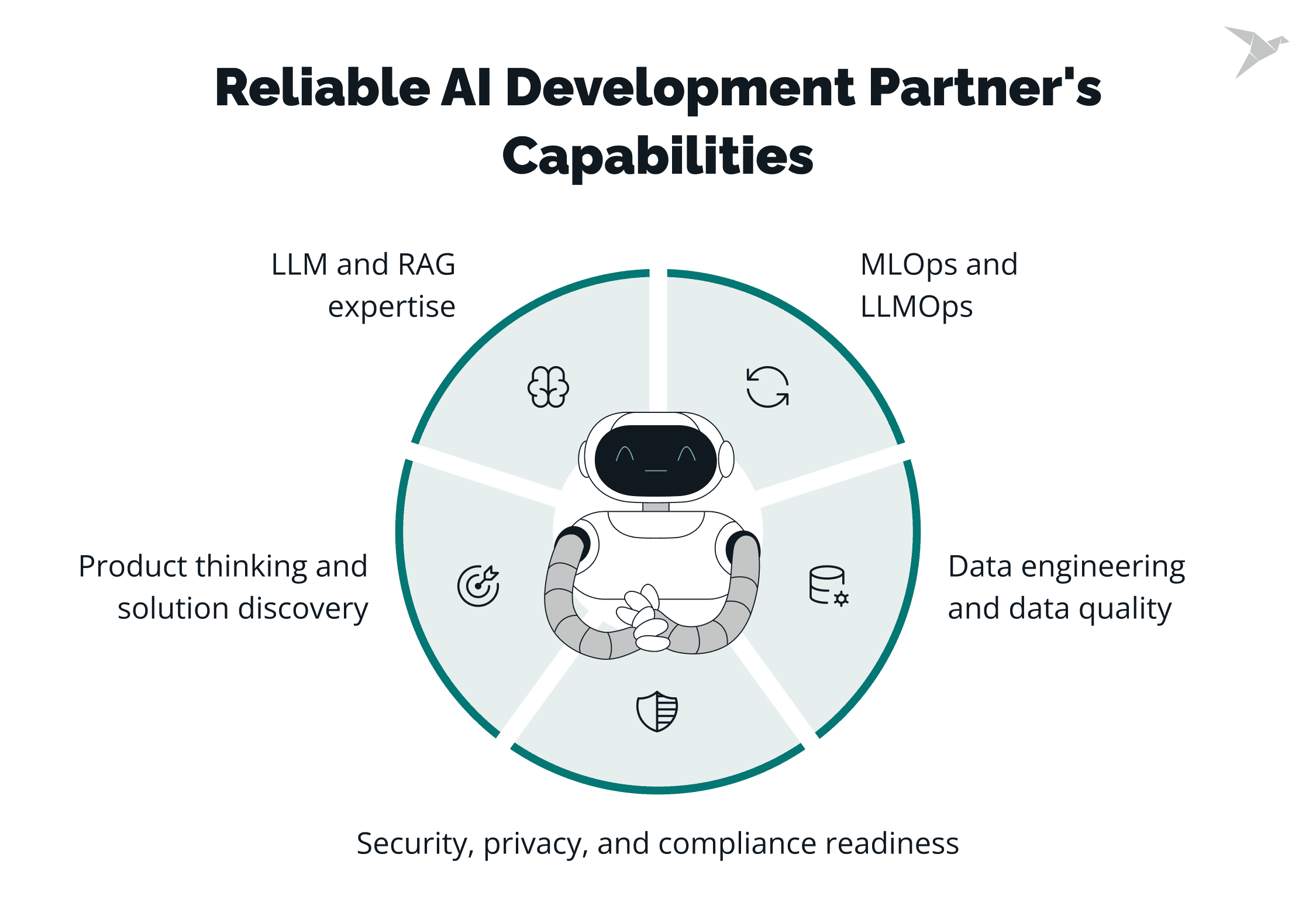

What Capabilities Must The Right AI Partner Have?

The right partner must cover the full delivery chain – the whole process from idea to stable operation. So, when thinking about how to choose an AI development partner, look beyond model demos. You need capabilities that map to real work: shaping the problem, preparing the data, building the system, deploying it, and keeping it reliable over time.

The depth of each capability depends on your goal. An MVP needs speed and experimentation, and a production system needs rigor and control.

Product thinking and solution discovery

This is a must-have for any project and a core part of successful AI implementation. A strong partner helps turn business goals into a clear scope tied to measurable business benefits.

They use business acumen to ask hard questions early: What problem are we solving? Who will use it? What does success look like? Where are the boundaries? This is especially important when adopting AI as part of a broader digital transformation.

Without product thinking, teams build technically impressive systems that miss the real need or fail to fit existing systems.

Data engineering and data quality

Data capability is always critical for AI implementation. The partner should know how to design pipelines, assess data quality, manage access, and apply governance rules that match your data architecture. If your data is fragmented or inconsistent, model performance will suffer, no matter how advanced the algorithm is.

For analytics or automation projects, this is a core requirement. For an MVP, lighter pipelines may be acceptable, but data risks must still be visible, especially when the system has to connect cleanly with existing systems.

LLM and RAG expertise

If your use case involves generative AI, LLM expertise becomes a must-have. Practical experience matters here, not hype.

This includes hands-on work with prompting, retrieval design, grounding answers in trusted data, and tool calling when the model must not only respond but act. For internal copilots or knowledge systems, retrieval quality often matters more than model size.

If your project is classic predictive modeling, strong fundamentals in Machine Learning frameworks may be more relevant than LLM depth. In either case, you want tailored solutions, not generic templates.

MLOps and LLMOps

For production systems, deployment and monitoring are non-negotiable. A capable partner should handle versioning, automated deployment, performance tracking, and model drift detection to support reliable AI implementation over time.

They should also plan for continuous improvement, not a one-time launch. For an MVP, this can be simpler. For large-scale rollout, it is a must-have to enable scalable solutions and protect long-term outcomes, especially in regulated settings where weak operations can introduce security vulnerabilities.

Security, privacy, and compliance readiness

Security and compliance are essential in regulated industries and important everywhere else. The partner should understand data access controls, logging, auditability, and privacy constraints. This becomes especially important in discussions around build vs buy AI, where data exposure and vendor risk must be evaluated carefully.

In short, match capabilities to your project type. Some skills are always required, such as product thinking and data fundamentals. Others become critical as you move from experiment to production. The key is to assess whether the partner can deliver safely, not just build quickly.

How Do You Evaluate An AI Partner’s Real Expertise?

The best tip here is to look for working proof. If you’re deciding how to choose an AI product development partner, shift the focus from presentations to delivery. Real expertise shows up in shipped systems, clear decisions, and measurable impact.

Start with case studies, but read them carefully

A strong case study explains the original problem, the constraints, the approach taken, and what changed in the business. It should include metrics that matter. Not just model accuracy, but outcomes tied to cost, time, revenue, or risk. If the story skips trade-offs or lessons learned, you’re not seeing the full picture.

Next, speak with previous clients

Ask what happened after launch. Did the system hold up in production? How did the partner respond when the data was messy or the scope shifted? Would they hire them again for a critical rollout? Specific answers tell you far more than general praise.

Then go deeper with a technical session

Bring your engineering or data leads. Ask the partner to walk through how they assess data quality, evaluate models, and design deployment and monitoring. Listen for structure and clarity. They should be able to explain complex decisions in simple terms. If they hide behind jargon, that’s a warning sign.

When possible, validate with a small pilot

A short, well-defined engagement shows how they handle your data, your standards, and your constraints. It’s the most reliable filter. Trends come and go in AI development trends. Sound engineering and disciplined delivery do not. In the end, choose based on what you can verify. Expertise is visible in process, transparency, and results.

Learn how we built a video-first hiring platform enhanced by AI tools

What Questions Should You Ask An AI Development Partner Before Signing?

Ask questions that force clear, specific answers. If you’re thinking about how to choose an AI development company, don’t ask, “Can you build this?” Ask questions that reveal how they think, how they manage risk, and how realistic their delivery plan is.

Start with your use case

Ask how they would define success for your project. What metrics would they track? What would make them say the project should stop or change direction? A mature partner won’t promise guaranteed results. They’ll talk about assumptions, risks, and trade-offs.

Move to data

Ask what they need from you in the first 30 days. How will they assess data quality? What happens if the data is incomplete or biased? Listen for a structured plan, not blind optimism.

Then ask about the model approach

Why this method and not another? What are the alternatives? How will they prevent overfitting or hallucinations? Strong teams explain choices in plain language and acknowledge limits.

Don’t skip evaluation

How will they test the system before launch? What benchmarks matter? How will they measure performance in real-world conditions, not just in a lab?

Finally, ask about operations

Who owns the system after deployment? How is monitoring handled? What triggers retraining or updates? What does failure look like and how is it handled?

Short, direct questions cut through marketing. You’re looking for clarity, structure, and honesty. If the answers feel vague, the delivery may be too.

What Security, Privacy, And Compliance Checks Should You Require?

Require controls that address how AI systems actually fail. If you’re evaluating vendors and wondering how to find an AI development company you can trust, focus on practical safeguards. AI systems tend to break in predictable ways: data leaks, prompt injection, unsafe outputs, and misconfigured access.

Start with data protection basics

Ask how data is stored, encrypted, and accessed across your cloud platforms. Who can see raw sensitive data? Is access role-based? Are logs enabled and reviewed?

You don’t need to be a security expert to ask this. You need clear answers about where your data lives, who controls it, and what ongoing maintenance looks like after go-live.

Next, address prompt injection and retrieval risks

If the system uses LLMs or retrieval (RAG), ask how it handles malicious or misleading inputs that could steer an ai model. What prevents external content from overriding trusted instructions? How do they validate retrieved documents before they shape an answer?

A strong partner will describe isolation layers, input filtering, and clear separation between system prompts and user input, supported by secure data pipelines and the right technical skills.

Then look at guardrails and output safety

How do they prevent harmful, biased, or non-compliant responses from the AI model? Are there content filters, policy checks, or human review steps for sensitive use cases?

What happens when the model produces an unsafe answer? There should be a defined fallback, not guesswork, plus clear post-deployment support and ongoing maintenance so issues are caught and fixed fast.

Finally, confirm compliance alignment

If you operate under regulations, ask how the system supports audit trails, logging, and explainability. Can decisions be traced? Can outputs be reviewed? Compliance must be built into architecture and process, not bolted on later with off-the-shelf tools or off-the-shelf solutions.

Keep it simple. You’re checking for discipline, clear data controls, and protected prompts. Defined safety rules. Traceable decisions. The right AI strategy supports your business strategy and includes reliable data pipelines, clear ownership by dedicated teams, and delivery practices like Agile methodologies.

You should also expect transparent communication and clear escalation paths during build and after launch, especially if your scope includes AI agents that act inside real workflows. If a partner can’t explain these in plain language, the risks may be larger than they appear.

Final Thoughts

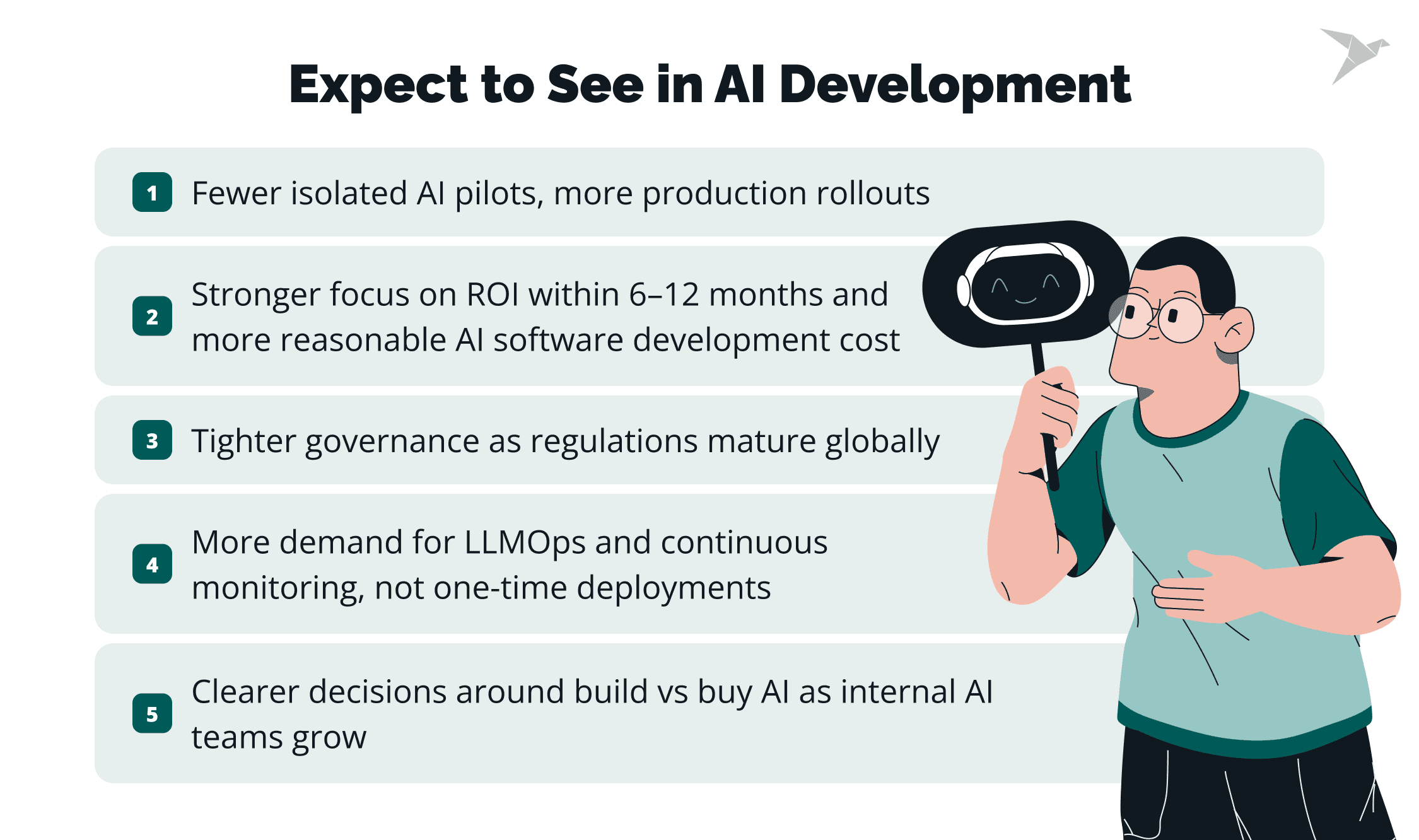

AI partnerships are entering a new phase. In 2025 and 2026, the market is shifting from experimentation to accountability. Most mid-size and enterprise companies have already tested AI in some form.

Recent industry reports show that while over 70% of organizations are piloting or using generative AI, fewer than one-third report significant financial returns so far. At the same time, global AI investment continues to grow at double-digit rates year over year. The gap between spending and outcomes is becoming impossible to ignore.

This changes how you should think about partners. The winners in 2026 will be the ones who deliver stable systems tied to real KPIs. Expect to see:

- Fewer isolated AI pilots, more production rollouts.

- Stronger focus on ROI within 6–12 months and more reasonable AI software development cost.

- Tighter governance as regulations mature globally will shape how teams choose and use AI technologies, and why an AI development partner matters more than ever.

More demand for LLMOps and continuous monitoring, not one-time deployments, will push companies to integrate AI into production with reliable pipelines, clear ownership, and ongoing support.

Clearer decisions around build vs buy AI as internal AI teams grow will depend on who can deliver custom solutions with technical excellence, not just demos. That includes strong model development, validated Machine Learning models, and clear proof from past projects that the AI company can ship and operate real systems.

Security and compliance will also move to the front. As AI regulations expand across the EU, the US, and Asia, traceability and risk controls will become baseline requirements rather than competitive advantages. These controls also help manage operational costs by reducing rework, incidents, and downtime.

At the same time, AI systems will become more embedded in daily workflows. Internal copilots, automation agents, and domain-specific models will shift from “innovation projects” to core infrastructure, including intelligent automation across core processes.

That means your AI partner must think like a long-term engineering collaborator, not a short-term experiment vendor, and be able to keep improving what’s already live.

Here’s the bottom line

- Choose outcomes over excitement, even when vendors claim they build the best AI.

- Choose measurable impact over model size, and prioritize what lowers risk and improves day-to-day performance.

- Choose discipline over speed alone, especially when you need production-grade delivery, monitoring, and ownership.

If you define success clearly, test expertise rigorously, and demand real safeguards, you’ll operationalize it safely and responsibly, with results that stand up to scrutiny in 2026 and beyond.

We are pleased to help

FAQ

Look for outcome focus. A strong partner starts with your business goal and defines clear success metrics. They cover the full path from data preparation to deployment and monitoring. They talk openly about risks, trade-offs, and constraints. And they can explain complex systems in plain language.

If you’re thinking about how to find an AI development partner, prioritize proven delivery, structured process, and measurable results over polished presentations.

Ask for proof from real deployments across similar AI projects. Review case studies with metrics tied to business impact and the ai solutions delivered. Speak with past clients about what happened after launch.

Run a technical deep dive with your engineering team to validate technical expertise in machine learning and natural language processing. If possible, start with a small pilot using your data to test custom AI solutions in real conditions. Production readiness shows up in monitoring, versioning, security controls, and post-launch support.

It depends on your timeline, internal expertise, and strategic goals. If AI is core to your product and you have strong data and engineering teams, building in-house can make sense.

If you need speed, specialized expertise, or help de-risking early stages, an experienced AI software development partner with a proven track record in custom software development and AI innovation can accelerate delivery and avoid costly mistakes. Many companies start with a partner, then gradually expand internal ownership as systems mature.

Outsourcing is often more cost-effective in the short term and helps you get to market faster. Working with an AI software development team can lower operational costs and speed up delivery even further, since you get immediate access to specialized expertise and ready-to-implement AI components without the time and expense of building an in-house team.

Building in-house can pay off over the long run, but it usually makes sense only when you have enough ongoing AI work to keep the team consistently utilized.

We offer three models. With a Dedicated Team, we build a team that works only on your project and can scale up or down as your needs change, so you get steady delivery without hiring overhead. With Fixed Price, we agree on requirements, deliverables, timeline, and cost upfront, which works best when the scope is clear, and you want a predictable budget.

With an R&D Center, we set up a long-term AI unit for ongoing research, testing, and product improvements, which is ideal when you need continuous experimentation rather than a one-time build.

TechMagic Academy

TechMagic Academy